Man beats machine at Go in human victory over AI

A human player has comprehensively defeated a top-ranked AI system at the board game Go, in a surprise reversal of the 2016 computer victory that was seen as a milestone in the rise of artificial intelligence.

Kellin Pelrine, an American player who is one level below the top amateur ranking, beat the machine by taking advantage of a previously unknown flaw that had been identified by another computer. But the head-to-head confrontation in which he won 14 of 15 games was carried out without direct computer support.

The triumph, which has not previously been reported, highlighted a weakness in the best Go computer programs that is shared by most of today’s widely used AI systems, including the ChatGPT chatbot created by San Francisco-based OpenAI.

The tactics that put a human back on top on the Go board were suggested by a computer program that had probed the AI systems looking for weaknesses. The suggested plan was then ruthlessly delivered by Pelrine.

“It was surprisingly easy for us to exploit this system,” said Adam Gleave, chief executive of FAR AI, the Californian research firm that designed the program. The software played more than 1mn games against KataGo, one of the top Go-playing systems, to find a “blind spot” that a human player could take advantage of, he added.

The winning strategy revealed by the software “is not completely trivial but it is not super-difficult” for a human to learn and could be used by an intermediate-level player to beat the machines, said Pelrine. He also used the method to win against another top Go system, Leela Zero.

The decisive victory, albeit with the help of tactics suggested by a computer, comes seven years after AI appeared to have taken an unassailable lead over humans at what is often regarded as the most complex of all board games.

AlphaGo, a system devised by Google-owned research company DeepMind, defeated the world Go champion Lee Sedol by four games to one in 2016. Sedol attributed his retirement from Go three years later to the rise of AI, saying that it was “an entity that cannot be defeated”. AlphaGo is not publicly available, but the systems Pelrine prevailed against are considered on a par.

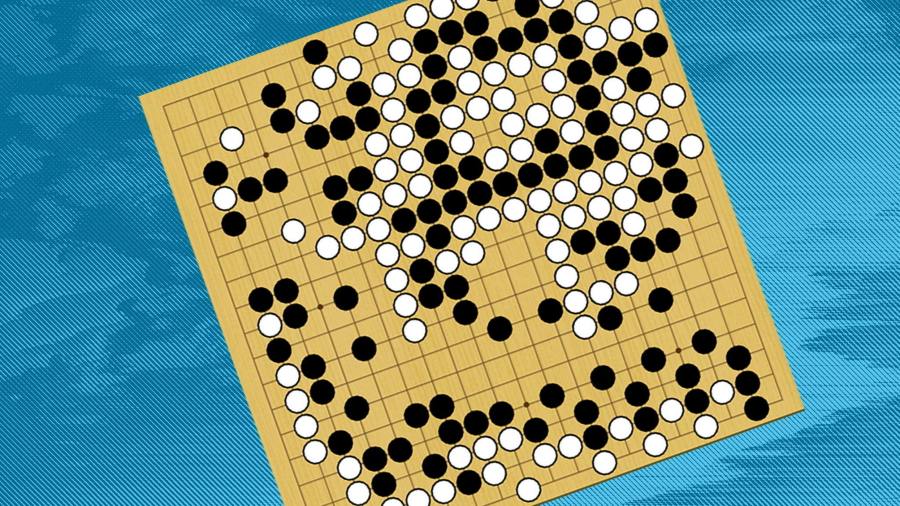

In a game of Go, two players alternately place black and white stones on a board marked out with a 19×19 grid, seeking to encircle their opponent’s stones and enclose the largest amount of space. The huge number of combinations means it is impossible for a computer to assess all potential future moves.

The tactics used by Pelrine involved slowly stringing together a large “loop” of stones to encircle one of his opponent’s own groups, while distracting the AI with moves in other corners of the board. The Go-playing bot did not notice its vulnerability, even when the encirclement was nearly complete, Pelrine said.

“As a human it would be quite easy to spot,” he added.

The discovery of a weakness in some of the most advanced Go-playing machines points to a fundamental flaw in the deep learning systems that underpin today’s most advanced AI, said Stuart Russell, a computer science professor at the University of California, Berkeley.

The systems can “understand” only specific situations they have been exposed to in the past and are unable to generalise in a way that humans find easy, he added.

“It shows once again we’ve been far too hasty to ascribe superhuman levels of intelligence to machines,” Russell said.

The precise cause of the Go-playing systems’ failure is a matter of conjecture, according to the researchers. One likely reason is that the tactic exploited by Pelrine is rarely used, meaning the AI systems had not been trained on enough similar games to realise they were vulnerable, said Gleave.

It is common to find flaws in AI systems when they are exposed to the kind of “adversarial attack” used against the Go-playing computers, he added. Despite that, “we’re seeing very big [AI] systems being deployed at scale with little verification”.
