Characters for good, created by artificial intelligence | MIT News

As it becomes easier to create hyper-realistic digital characters using artificial intelligence, much of the conversation around these tools has centered on misleading and potentially dangerous deepfake content. But the technology can also be used for positive purposes: to revive Albert Einstein to teach a physics class, talk through a career change with your older self, or anonymize people while preserving facial communication.

To encourage the technology’s positive possibilities, MIT Media Lab researchers and their collaborators at the University of California at Santa Barbara and Osaka University have compiled an open-source, easy-to-use character generation pipeline that combines AI models for facial gestures, voice, and motion and can be used to create a variety of audio and video outputs.

The pipeline also marks the resulting output with a traceable, as well as human-readable, watermark to distinguish it from authentic video content and to show how it was generated, an addition intended to help prevent its malicious use.

By making this pipeline easily available, the researchers hope to inspire teachers, students, and health-care workers to explore how such tools can help them in their respective fields. If more students, educators, health-care workers, and therapists have a chance to build and use these characters, the results could improve health and well-being and contribute to personalized education, the researchers write in Nature Machine Intelligence.

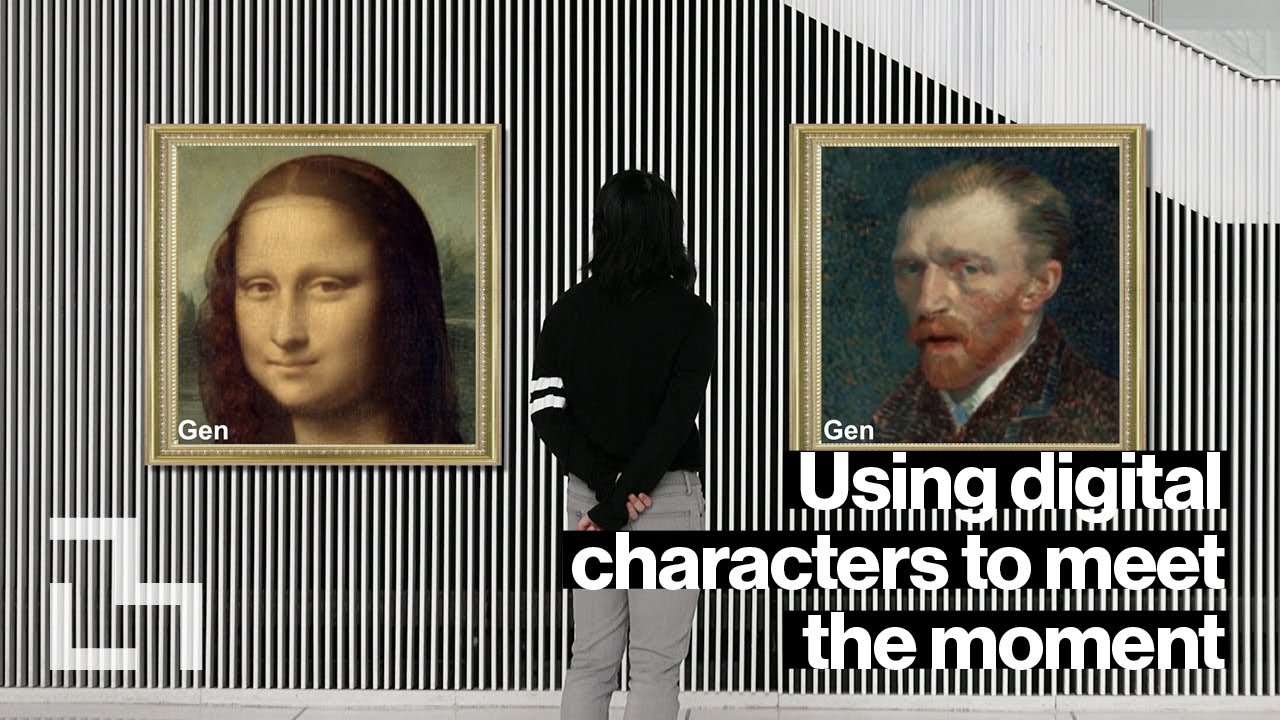

AI-generated characters can be used for positive purposes like enhancing educational content, preserving privacy in sensitive conversations without erasing non-verbal cues, and allowing users to interact with friendly animated characters in potentially stressful situations. Video: Jimmy Day / MIT Media Lab

“It will be a strange world indeed when AIs and humans begin to share identities. This paper does an incredible job of thought leadership, mapping out the space of what is possible with AI-generated characters in domains ranging from education to health to close relationships, while giving a tangible roadmap on how to avoid the ethical challenges around privacy and misrepresentation,” says Jeremy Bailenson, founding director of the Stanford Virtual Human Interaction Lab, who was not associated with the study.

Although the world mostly knows the technology from deepfakes, “we see its potential as a tool for creative expression,” says the paper’s first author Pat Pataranutaporn, a PhD student in professor of media technology Pattie Maes’ Fluid Interfaces research group.

Other authors on the paper include Maes; Fluid Interfaces master’s student Valdemar Danry and PhD student Joanne Leong; Media Lab Research Scientist Dan Novy; Osaka University Assistant Professor Parinya Punpongsanon; and University of California at Santa Barbara Assistant Professor Misha Sra.

Deeper truths and deeper learning

Generative adversarial networks, or GANs, a combination of two neural networks that compete against each other, have made it easier to create photorealistic images, clone voices, and animate faces. Pataranutaporn, with Danry, first explored the technology’s possibilities in a project called Machinoia, where he generated multiple alternative representations of himself (as a child, as an old man, as female) to have a self-dialogue about life choices from different perspectives. The unusual deepfaking experience made him aware of his “journey as a person,” he says. “It was deep truth: to uncover something about yourself you’ve never thought of before, using your own data on your own self.”

Self-exploration is only one of the positive applications of AI-generated characters, the researchers say. Experiments show, for instance, that these characters can make students more enthusiastic about learning and improve cognitive task performance. The technology offers a way for instruction to be “personalized to your interest, your idols, your context, and can be improved over time,” Pataranutaporn explains, as a complement to traditional instruction.

For instance, the MIT researchers used their pipeline to create a synthetic version of Johann Sebastian Bach, which had a live conversation with renowned cellist Yo-Yo Ma in Media Lab Professor Tod Machover’s musical interfaces class, to the delight of both the students and Ma.

Other applications might include characters who help deliver therapy, to alleviate a growing shortage of mental health professionals and reach the estimated 44 percent of Americans with mental health issues who never get counseling, or AI-generated content that delivers exposure therapy to people with social anxiety. In a related use case, the technology can be used to anonymize faces in video while preserving facial expressions and emotions, which could be useful for sessions where people want to share personally sensitive information such as health and trauma experiences, or for whistleblowers and witness accounts.

But there are also more artistic and playful use cases. In this fall’s Experiments in Deepfakes class, led by Maes and research affiliate Roy Shilkrot, students used the technology to animate the figures in a historical Chinese painting and to create a relationship “breakup simulator,” among other projects.

Legal and ethical challenges

Many of the applications of AI-generated characters raise legal and ethical issues that must be discussed as the technology evolves, the researchers note in their paper. For instance, how will we decide who has the right to digitally recreate a historical character? Who is legally liable if an AI clone of a famous person promotes harmful behavior online? And is there any danger that we will prefer interacting with synthetic characters over humans?

“One of our goals with this research is to raise awareness about what is possible, ask questions, and start public conversations about how this technology can be used ethically for societal benefit. What technical, legal, policy, and educational actions can we take to promote positive use cases while reducing the possibility for harm?” says Maes.

By sharing the technology widely, while clearly labeling it as synthesized, Pataranutaporn says, “we hope to encourage more creative and positive use cases, while also educating people about the technology’s potential benefits and harms.”
