Synthetic Media: How deepfakes could soon change our world – 60 Minutes

You may never have heard the term “synthetic media” (more commonly known as “deepfakes”), but our military, law enforcement and intelligence agencies certainly have. They are hyper-realistic video and audio recordings that use artificial intelligence and “deep” learning to create “fake” content or “deepfakes.” The U.S. government has grown increasingly concerned about their potential to be used to spread disinformation and commit crimes. That’s because the creators of deepfakes have the power to make people say or do anything, at least on our screens. Most Americans have no idea how far the technology has come in just the last four years or the danger, disruption and opportunities that come with it.

Deepfake Tom Cruise: You know I do all my own stunts, obviously. I also do my own music.

Chris Ume/Metaphysic

This is not Tom Cruise. It’s one of a series of hyper-realistic deepfakes of the movie star that began appearing on the video-sharing app TikTok earlier this year.

Deepfake Tom Cruise: Hey, what’s up TikTok?

For days people wondered if they were real, and if not, who had created them.

Deepfake Tom Cruise: It’s important.

Finally, a modest, 32-year-old Belgian visual effects artist named Chris Umé stepped forward to claim credit.

Chris Umé: We believed as long as we’re making clear this is a parody, we’re not doing anything to harm his image. But after a few videos, we realized like, this is blowing up; we’re getting millions and millions and millions of views.

Umé says his work is made easier because he teamed up with a Tom Cruise impersonator whose voice, gestures and hair are nearly identical to the real McCoy. Umé only deepfakes Cruise’s face and stitches that onto the real video and sound of the impersonator.

Deepfake Tom Cruise: That’s where the magic happens.

For technophiles, DeepTomCruise was a tipping point for deepfakes.

Deepfake Tom Cruise: Still got it.

Bill Whitaker: How do you make this so seamless?

Chris Umé: It begins with training a deepfake model, of course. I have all the face angles of Tom Cruise, all the expressions, all the emotions. It takes time to create a really good deepfake model.

Bill Whitaker: What do you mean “training the model?” How do you train your computer?

Chris Umé: “Training” means it’s going to analyze all the images of Tom Cruise, all his expressions, compared to my impersonator. So the computer’s gonna teach itself: When my impersonator is smiling, I’m gonna recreate Tom Cruise smiling, and that’s, that’s how you “train” it.

Chris Ume/Metaphysic

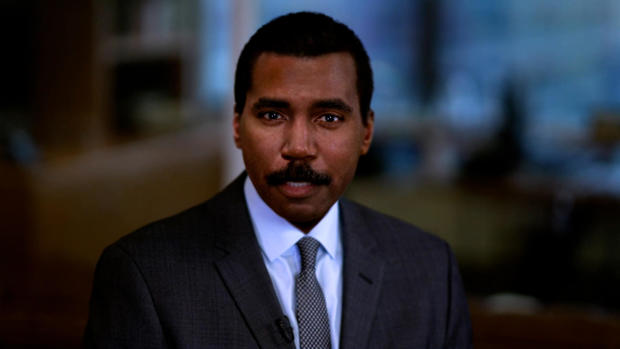

Using video from the CBS News archives, Chris Umé was able to train his computer to learn every aspect of my face, and wipe away the decades. This is how I looked 30 years ago. He can even remove my mustache. The possibilities are endless and a little frightening.

Chris Umé: I see a lot of mistakes in my work. But I don’t mind it, actually, because I don’t want to fool people. I just want to show them what’s possible.

Bill Whitaker: You don’t want to fool people.

Chris Umé: No. I want to entertain people, I want to raise awareness, and I want to show where it’s all going.

Nina Schick: It is without a doubt one of the most important revolutions in the future of human communication and perception. I would say it’s analogous to the birth of the internet.

Political scientist and technology consultant Nina Schick wrote one of the first books on deepfakes. She first came across them four years ago when she was advising European politicians on Russia’s use of disinformation and social media to interfere in democratic elections.

Bill Whitaker: What was your reaction when you first realized this was possible and was going on?

Nina Schick: Well, given that I was coming at it from the perspective of disinformation and manipulation in the context of elections, the fact that AI can now be used to make images and video that are fake, that look hyper realistic. I thought, well, from a disinformation perspective, this is a game-changer.

So far, there’s no evidence deepfakes have “changed the game” in a U.S. election, but earlier this year the FBI put out a notification warning that “Russian [and] Chinese… actors are using synthetic profile images,” creating deepfake journalists and media personalities to spread anti-American propaganda on social media.

The U.S. military, law enforcement and intelligence agencies have kept a wary eye on deepfakes for years. At a 2019 hearing, Senator Ben Sasse of Nebraska asked if the U.S. is prepared for the onslaught of disinformation, fakery and fraud.

Ben Sasse: When you think about the catastrophic potential to public trust and to markets that could come from deepfake attacks, are we organized in a way that we could possibly respond fast enough?

Dan Coats: We clearly need to be more agile. It poses a major threat to the United States and something that the intelligence community needs to be restructured to address.

Since then, technology has continued moving at an exponential pace while U.S. policy has not. Efforts by the government and big tech to detect synthetic media are competing with a community of “deepfake artists” who share their latest creations and techniques online.

Like the internet, the first place deepfake technology took off was in pornography. The sad fact is the majority of deepfakes today consist of women’s faces, mostly celebrities, superimposed onto pornographic videos.

Nina Schick: The first use case in pornography is just a harbinger of how deepfakes can be used maliciously in many different contexts, which are now starting to arise.

Bill Whitaker: And they’re getting better all the time?

Nina Schick: Yes. The incredible thing about deepfakes and synthetic media is the pace of acceleration when it comes to the technology. And by five to seven years, we are basically looking at a trajectory where any single creator, so a YouTuber, a TikToker, will be able to create the same level of visual effects that is only accessible to the most well-resourced Hollywood studio today.

The technology behind deepfakes is artificial intelligence, which mimics the way humans learn. In 2014, researchers for the first time used computers to create realistic-looking faces using something called “generative adversarial networks,” or GANs.

Nina Schick: So you set up an adversarial game where you have two AIs combating each other to try and create the best fake synthetic content. And as these two networks combat each other, one trying to generate the best image, the other trying to detect where it could be better, you basically end up with an output that is increasingly improving all the time.
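The adversarial game Schick describes can be sketched in a few lines of code. The toy below is only an illustration and not how any system mentioned in this report was built: it works on single numbers rather than images, and the function name `train_toy_gan`, the target distribution (mean 4.0, standard deviation 0.5), the learning rate and the linear form of both networks are all assumptions made for the example. One network (the generator) turns random noise into fake samples, the other (the discriminator) scores samples as real or fake, and each is updated in turn against the other.

```python
import numpy as np


def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))


def train_toy_gan(steps=200, batch=64, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data."""
    rng = np.random.default_rng(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        # "Real" samples; the target distribution is an assumption for this toy.
        real = rng.normal(4.0, 0.5, size=batch)
        noise = rng.normal(0.0, 1.0, size=batch)
        fake = a * noise + b

        # Discriminator step: raise D(real) and lower D(fake).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = np.mean(-(1.0 - d_real) * real) + np.mean(d_fake * fake)
        grad_c = np.mean(-(1.0 - d_real)) + np.mean(d_fake)
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: change a and b so the updated D rates fakes as real.
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1.0 - d_fake) * w  # gradient of -log D(fake) w.r.t. fake
        a -= lr * np.mean(grad_fake * noise)
        b -= lr * np.mean(grad_fake)
    return {"a": float(a), "b": float(b), "w": float(w), "c": float(c)}


if __name__ == "__main__":
    print(train_toy_gan())
```

Running the script prints the four learned parameters. The generator's offset `b` starts at 0 and is pushed toward the real data by the discriminator's feedback. A setup this simple can oscillate rather than settle, which is one reason real image-generating GANs use much larger networks and additional stabilizing techniques.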

Schick says the power of generative adversarial networks is on full display at a website called “ThisPersonDoesNotExist.com.”

Nina Schick: Every time you refresh the page, there’s a new image of a person who does not exist.

Each is a one-of-a-kind, entirely AI-generated image of a human being who never has, and never will, walk this Earth.

Nina Schick: You can see every pore on their face. You can see every hair on their head. But now imagine that technology being expanded out not only to human faces, in still images, but also to video, to audio synthesis of people’s voices and that’s really where we’re heading right now.

Bill Whitaker: This is mind-blowing.

Nina Schick: Yes. [Laughs]

Bill Whitaker: What is the positive side of this?

Nina Schick: The technology itself is neutral. So just as bad actors are, without a doubt, going to be using deepfakes, it is also going to be used by good actors. So first of all, I would say that there’s a very strong case to be made for the commercial use of deepfakes.

Victor Riparbelli is CEO and co-founder of Synthesia, based in London, one of dozens of companies using deepfake technology to transform video and audio productions.

Victor Riparbelli: The way Synthesia works is that we’ve essentially replaced cameras with code, and once you’re working with software, we do a lotta things that you wouldn’t be able to do with a normal camera. We’re still very early. But this is gonna be a fundamental change in how we create media.

Synthesia makes and sells “digital avatars,” using the faces of paid actors to deliver personalized messages in 64 languages… and allows corporate CEOs to address employees overseas.

Snoop Dogg: Did anyone say, Just Eat?

Synthesia has also helped entertainers like Snoop Dogg go forth and multiply. This elaborate TV commercial for European food delivery service Just Eat cost a fortune.

Snoop Dogg: J-U-S-T-E-A-T-…

Victor Riparbelli: Just Eat has a subsidiary in Australia, which is called Menulog. So what we did with our technology was we switched out the word Just Eat for Menulog.

Snoop Dogg: M-E-N-U-L-O-G… Did anyone say, “MenuLog?”

Victor Riparbelli: And all of a sudden they had a localized version for the Australian market without Snoop Dogg having to do anything.

Bill Whitaker: So he makes twice the money, huh?

Victor Riparbelli: Yeah.

All it took was eight minutes of me reading a script on camera for Synthesia to create my synthetic talking head, complete with my gestures, head and mouth movements. Another company, Descript, used AI to create a synthetic version of my voice, with my cadence, tenor and syncopation.

Deepfake Bill Whitaker: This is the result. The words you’re hearing were never spoken by the real Bill into a microphone or to a camera. He merely typed the words into a computer and they come out of my mouth.

It may look and sound a little rough around the edges right now, but as the technology improves, the possibilities of spinning words and images out of thin air are endless.

Deepfake Bill Whitaker: I’m Bill Whitaker. I’m Bill Whitaker. I’m Bill Whitaker.

Bill Whitaker: Wow. And the head, the eyebrows, the mouth, the way it moves.

Victor Riparbelli: It’s all synthetic.

Bill Whitaker: I could be lounging at the beach. And say, “People, you know, I’m not gonna come in today. But you can use my avatar to do the work.”

Victor Riparbelli: Maybe in a few years.

Bill Whitaker: Don’t tell me that. I’d be tempted.

Tom Graham: I think it will have a big impact.

The rapid advances in synthetic media have caused a virtual gold rush. Tom Graham, a London-based lawyer who made his fortune in cryptocurrency, recently started a company called Metaphysic with none other than Chris Umé, creator of DeepTomCruise. Their goal: develop software to allow anyone to create Hollywood-caliber movies without lights, cameras, or even actors.

Tom Graham: As the hardware scales and as the models become more efficient, we can scale up the size of that model to be an entire Tom Cruise body, movement and everything.

Bill Whitaker: Well, talk about disruptive. I mean, are you gonna put actors out of jobs?

Tom Graham: I think it’s a great thing if you’re a well-known actor today because you may be able to let somebody collect data for you to create a version of yourself in the future where you could be acting in movies after you have deceased. Or you could be the director, directing your younger self in a movie or something like that.

If you are wondering how all of this is legal, most deepfakes are considered protected free speech. Attempts at legislation are all over the map. In New York, commercial use of a performer’s synthetic likeness without consent is banned for 40 years after their death. California and Texas prohibit deceptive political deepfakes in the lead-up to an election.

Nina Schick: There are so many ethical, philosophical gray zones here that we really need to think about.

Bill Whitaker: So how do we as a society grapple with this?

Nina Schick: Just understanding what’s going on. Because a lot of people still don’t know what a deepfake is, what synthetic media is, that this is now possible. The counter to that is, how do we inoculate ourselves and understand that this kind of content is coming and exists without being completely cynical? Right? How do we do it without losing trust in all authentic media?

That’s going to require all of us to figure out how to maneuver in a world where seeing is not always believing.

Produced by Graham Messick and Jack Weingart. Broadcast associate, Emilio Almonte. Edited by Richard Buddenhagen.