AI cuts, flows and goes eco-friendly – TechCrunch

Research in the field of machine learning and AI, now a key technology in practically every industry and company, is far too voluminous for anyone to read it all. This column aims to collect some of the most relevant recent discoveries and papers, particularly in, but not limited to, artificial intelligence, and explain why they matter.

This week, AI applications have turned up in several unexpected niches thanks to the technology's ability to sort through large amounts of data, or alternatively to make sensible predictions based on limited evidence.

We’ve seen machine learning models taking on big datasets in biotech and finance, but researchers at ETH Zurich and LMU Munich are applying similar techniques to the data generated by international development aid projects such as disaster relief and housing. The team trained its model on millions of projects (amounting to $2.8 trillion in funding) from the last 20 years, an enormous dataset that is too complex to be manually analyzed in detail.

“You can think of the process as an attempt to read an entire library and sort similar books into topic-specific shelves. Our algorithm takes into account 200 different dimensions to determine how similar these 3.2 million projects are to each other, an impossible workload for a human being,” said study author Malte Toetzke.
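To make the "200 dimensions" idea concrete, here is a minimal sketch of how similarity between items described by 200 numbers each could be measured. It is not the researchers' method: the article does not say which similarity measure or model they used. Cosine similarity, the randomly generated vectors, the project names and the helper names `cosine_similarity`, `most_similar` and `make_vector` are all assumptions made for illustration.

```python
import math
import random

DIMENSIONS = 200  # the only figure taken from the article


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction, so treat it as dissimilar
    return dot / (norm_a * norm_b)


def most_similar(query, catalog):
    """Return the name of the catalog entry whose vector is closest to `query`."""
    return max(catalog, key=lambda name: cosine_similarity(query, catalog[name]))


def make_vector(seed):
    """Hypothetical stand-in for a real 200-dimensional project description."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(DIMENSIONS)]


# Three made-up "projects", each represented by 200 numbers.
catalog = {
    "flood relief": make_vector(1),
    "housing": make_vector(2),
    "irrigation": make_vector(3),
}

# A new project that is a slightly perturbed copy of the "housing" vector.
noise = random.Random(99)
query = [x + noise.gauss(0.0, 0.1) for x in catalog["housing"]]

print(most_similar(query, catalog))
```

Sorting books onto shelves, in this picture, amounts to repeating that comparison across all pairs and grouping the closest matches, which is where the workload stops being feasible by hand at 3.2 million entries.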

Very top-level trends suggest that spending on inclusion and diversity has increased, while climate spending has, surprisingly, decreased in the last few years. You can examine the dataset and trends they analyzed here.

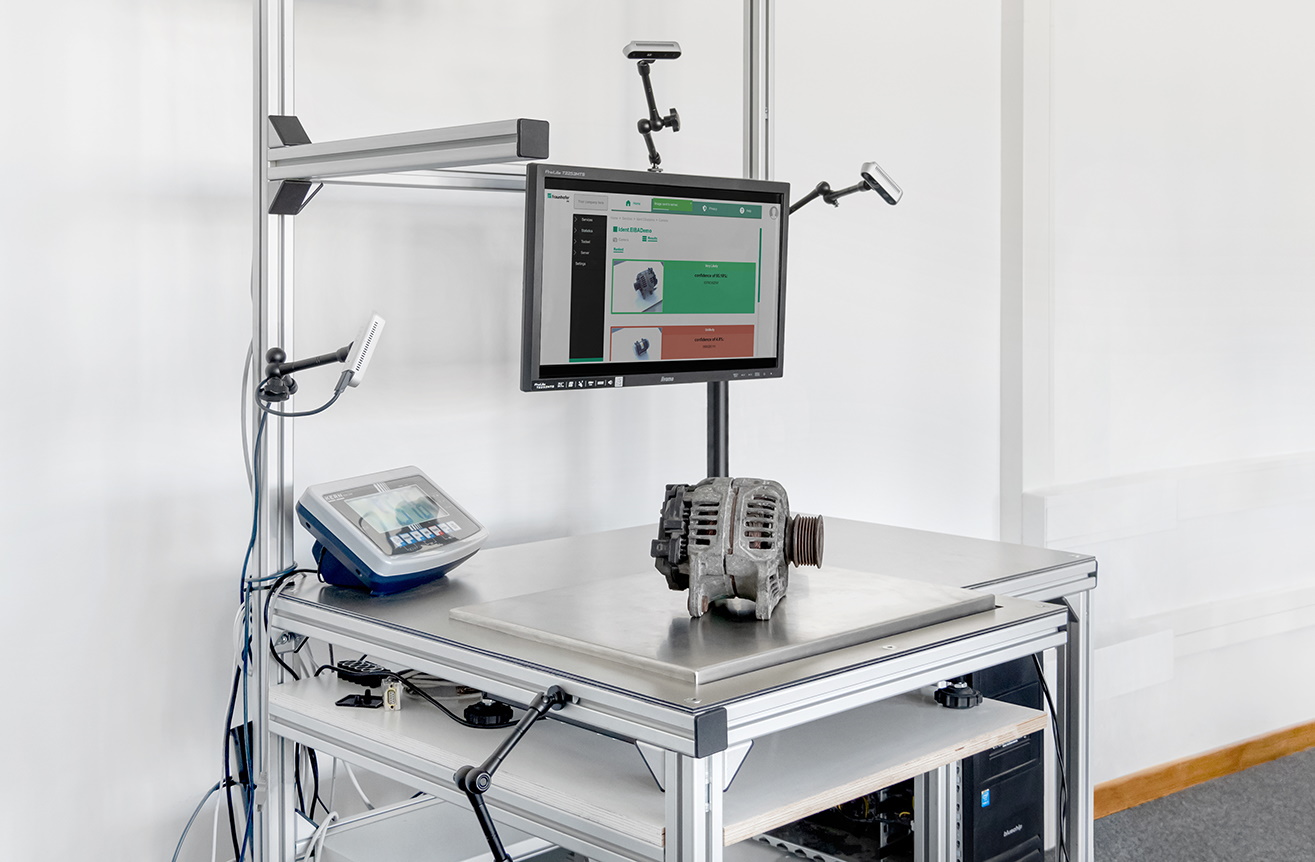

Another area few people think about is the large number of machine parts and components that are produced by various industries at an enormous clip. Some can be reused, some recycled, and others must be disposed of responsibly, but there are too many for human specialists to go through. German R&D outfit Fraunhofer has developed a machine learning model for identifying parts so they can be put to use instead of heading to the scrap yard.

Image Credits: Fraunhofer

The system relies on more than ordinary camera views, since parts may look similar but be very different, or be identical mechanically but differ visually due to rust or wear. So each part is also weighed and scanned by 3D cameras, and metadata like origin is included as well. The model then suggests what it thinks the part is so the person inspecting it doesn't have to start from scratch. It is hoped that tens of thousands of parts will soon be saved, and the processing of millions accelerated, by using this AI-assisted identification method.

Physicists have found an interesting way to bring ML's qualities to bear on a centuries-old problem. Essentially, researchers are always looking for ways to show that the equations that govern fluid dynamics (some of which, like Euler's, date to the 18th century) are incomplete, meaning that they break at certain extreme values. Using traditional computational techniques this is difficult to do, though not impossible. But researchers at CIT and Hang Seng University in Hong Kong propose a new deep learning method to isolate likely instances of fluid dynamics singularities, while others are applying the technique in other ways to the field. This Quanta article explains this interesting development quite well.

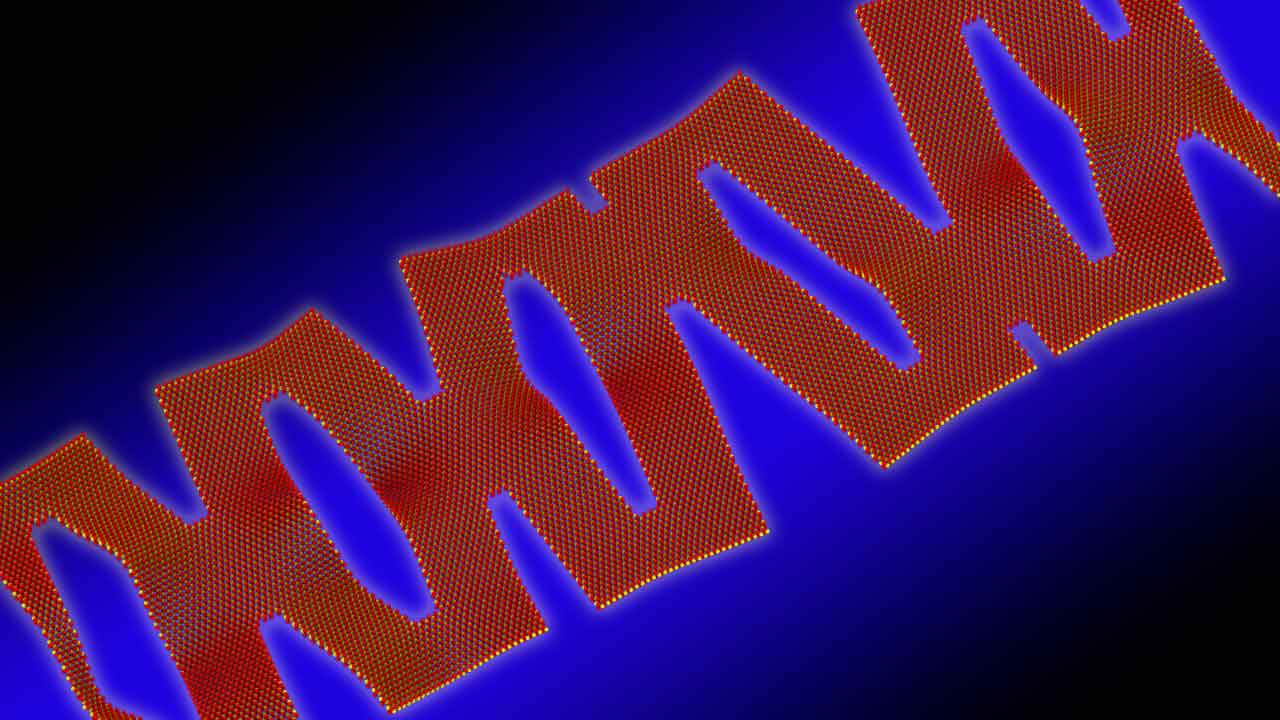

Another centuries-old concept getting an ML layer is kirigami, the art of paper-cutting that many will be familiar with in the context of making paper snowflakes. The technique goes back centuries in Japan and China in particular, and can produce remarkably complex and flexible structures. Researchers at Argonne National Labs took inspiration from the concept to theorize a 2D material that can retain electronics at microscopic scale but also flex easily.

The team had been performing tens of thousands of experiments with one to six cuts manually, and used that data to train the model. They then used a Department of Energy supercomputer to perform simulations down to the molecular level. In seconds it produced a 10-cut variation with 40% stretchability, far beyond what the team had expected or even tried on their own.

Image Credits: Argonne National Labs

“It has figured out things we never told it to figure out. It learned something the way a human learns and used its knowledge to do something different,” said project lead Pankaj Rajak. The success has spurred them to increase the complexity and scope of the simulation.

Another interesting extrapolation done by a specially trained AI has a computer vision model reconstructing color data from infrared inputs. Normally a camera capturing IR wouldn't know anything about what color an object was in the visible spectrum. But this experiment found correlations between certain IR bands and visible ones, and created a model to convert images of human faces captured in IR into ones that approximate the visible spectrum.

It's still just a proof of concept, but such spectrum flexibility could be a useful tool in science and photography.

—

Meanwhile, a new study co-authored by Google AI lead Jeff Dean pushes back against the notion that AI is an environmentally costly endeavor, owing to its high compute requirements. While some research has found that training a large model like OpenAI’s GPT-3 can generate carbon dioxide emissions equivalent to those of a small neighborhood, the Google-affiliated study contends that “following best practices” can reduce machine learning carbon emissions by up to 1000x.

The practices in question concern the types of models used, the machines used to train models, “mechanization” (e.g. computing in the cloud versus on local computers) and “map” (picking data center locations with the cleanest energy). According to the coauthors, selecting “efficient” models alone can reduce computation by factors of five to 10, while using processors optimized for machine learning training, such as GPUs, can improve the performance-per-Watt ratio by factors of two to five.
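For a rough sense of how these figures relate to the headline 1000x claim, the short sketch below multiplies the two quoted ranges together. It rests on assumptions the article does not state: that the two factors are independent and compound multiplicatively, and that reduced computation translates directly into reduced energy use. The helper name `combined_range` is made up for this illustration.

```python
def combined_range(*factor_ranges):
    """Multiply (low, high) improvement factors, assuming they are independent."""
    low, high = 1.0, 1.0
    for factor_low, factor_high in factor_ranges:
        low *= factor_low
        high *= factor_high
    return low, high


# Ranges quoted in the article.
MODEL_FACTOR = (5, 10)    # choosing "efficient" models
MACHINE_FACTOR = (2, 5)   # ML-optimized processors, performance per Watt
HEADLINE_FACTOR = 1000    # the study's "up to 1000x" figure

low, high = combined_range(MODEL_FACTOR, MACHINE_FACTOR)
print(f"Model and machine choices combined: {low:.0f}x to {high:.0f}x")

# What the other two practices would have to contribute to reach the headline,
# under the same multiplicative assumption.
remaining_low = HEADLINE_FACTOR / high
remaining_high = HEADLINE_FACTOR / low
print(f"Still needed from the other practices: {remaining_low:.0f}x to {remaining_high:.0f}x")
```

Under those assumptions the two quoted practices account for roughly 10x to 50x, so most of the claimed reduction would have to come from where and how the computation is run.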

Any thread of research suggesting that AI’s environmental impact can be lessened is cause for celebration, indeed. But it must be pointed out that Google isn’t a neutral party. Many of the company’s products, from Google Maps to Google Search, rely on models that required large amounts of energy to develop and run.

Mike Cook, a member of the Knives and Paintbrushes open research group, points out that, even if the study’s estimates are accurate, there simply isn’t a good reason for a company not to scale up in an energy-inefficient way if it benefits them. While academic groups might pay attention to metrics like carbon impact, companies aren’t incentivized in the same way, at least currently.

“The whole reason we’re having this conversation to begin with is that companies like Google and OpenAI had effectively infinite funding, and chose to leverage it to build models like GPT-3 and BERT at any cost, because they knew it gave them an advantage,” Cook told TechCrunch via email. “Overall, I think the paper says some nice stuff and it’s great if we’re thinking about efficiency, but the issue isn’t a technical one in my opinion. We know for a fact that these companies will go big when they need to, they won’t restrain themselves, so saying this is now solved forever just feels like an empty line.”

The last topic for this week isn't really about machine learning exactly, but rather what might be a way forward in simulating the brain in a more direct way. EPFL bioinformatics researchers created a mathematical model for generating large numbers of unique but accurate simulated neurons that could eventually be used to build digital twins of neuroanatomy.

“The findings are already enabling Blue Brain to build biologically detailed reconstructions and simulations of the mouse brain, by computationally reconstructing brain regions for simulations which replicate the anatomical properties of neuronal morphologies and include region specific anatomy,” said researcher Lida Kanari.

Don’t expect sim-brains to make for better AIs (this is very much in pursuit of advances in neuroscience), but perhaps the insights from simulated neuronal networks may lead to fundamental improvements in our understanding of the processes AI seeks to imitate digitally.
